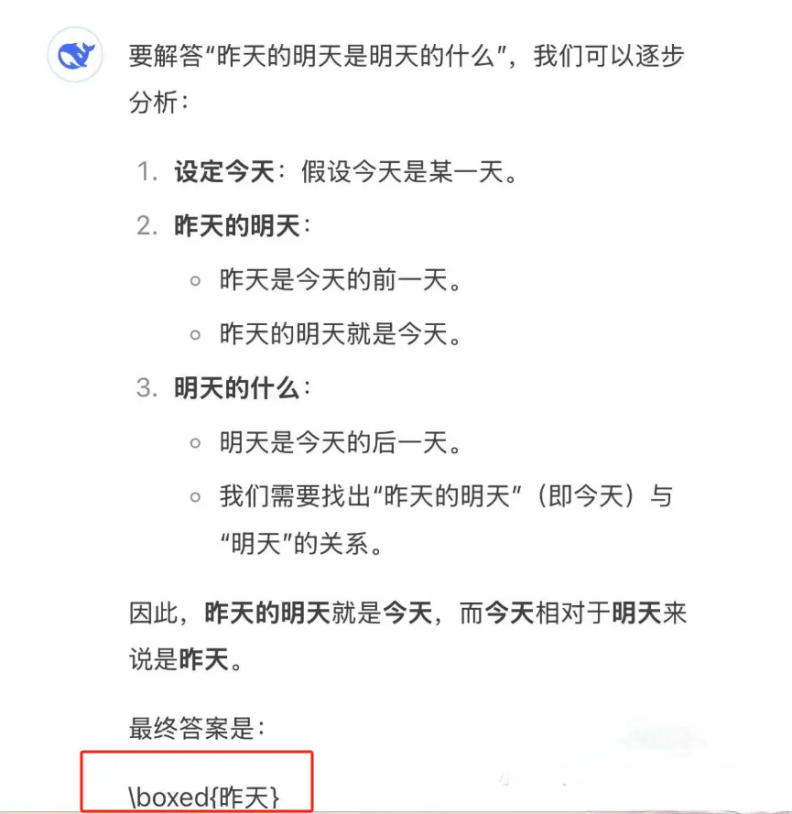

After the explosive growth, stability has become a necessity.

Image source: Generated by Wujie AI

Behind DeepSeek's "server busy" messages lies more than the anxious waiting of ordinary users. When API response times cross a critical threshold, the ripples spread through the world of DeepSeek developers too, setting off a butterfly effect that keeps oscillating outward.

On January 30, Lin Sen, a Beijing-based AI developer whose product integrates DeepSeek, suddenly received an alert from his program's backend. Only a few days after he had celebrated DeepSeek's breakout, his program was forced offline for three days because it could no longer call DeepSeek.

At first, Lin Sen assumed his DeepSeek account had run out of balance. Only on February 3, when he returned to work after the Spring Festival holiday, did he receive a notice that API recharges for DeepSeek had been suspended. By then, even though his account still had sufficient balance, he could no longer call DeepSeek.

On the third day after Lin Sen received the backend notification, DeepSeek officially announced on February 6 that it would suspend API service recharges. Nearly half a month later, as of February 19, the API recharge service on the DeepSeek open platform had still not returned to normal.

Caption: The DeepSeek developer platform has not yet resumed recharges. Image source: Screenshot from Wujie News

Once he realized the shutdown was caused by overloaded DeepSeek servers, Lin Sen felt "abandoned": as a developer, he had received no advance notice and, for several days, no after-sales support.

"It's like a small shop at your doorstep where you're a regular customer with a membership card and have always gotten along with the owner. Then one day the shop is rated a Michelin restaurant, and the owner abandons the regulars and stops honoring their membership cards," Lin Sen said.

As one of the first developers to deploy DeepSeek, back in July 2023, Lin Sen was excited about its breakout. Now, to keep his service running, he has had no choice but to switch to ChatGPT, because "ChatGPT, although a bit more expensive, is at least stable."

As DeepSeek turned from a word-of-mouth neighborhood shop into a trendy Michelin restaurant, more developers who, like Lin Sen, could no longer access the service began leaving DeepSeek.

In June 2024, at an early stage of product development, the Xiaochuang AI Q&A machine integrated DeepSeek V2. Xiaochuang partner Lou Chi was struck by the fact that DeepSeek was then the only large model that could recite "The Yueyang Tower" without a single error, so the team gave DeepSeek one of the product's core functional roles.

However, for developers, DeepSeek, while good, has always had issues with stability.

Lou Chi told Wujie News (ID: wujicaijing) that during the Spring Festival, it was not only consumer (C-end) users who kept running into "server busy"; developers, too, often could not call DeepSeek. The team decided to call DeepSeek through several large model platforms that already host it, sending requests to them simultaneously.

After all, "there are now dozens of platforms offering the full version of DeepSeek R1." Running R1 on these platforms, combined with the team's own agents and prompts, can still meet user needs.
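Sending the same request to several DeepSeek R1 hosts at once and keeping the first usable answer can be sketched with Python's `concurrent.futures`. This is a minimal sketch, not Xiaochuang's actual code: the provider names and call functions are hypothetical stand-ins for real HTTP clients, and a production version would add timeouts and cancel the slower requests.

```python
from concurrent.futures import ThreadPoolExecutor, as_completed
from typing import Callable, List, Sequence, Tuple

# A provider is (name, call); call(prompt) returns the model's reply text or
# raises when that platform is overloaded or unreachable.
Provider = Tuple[str, Callable[[str], str]]


def ask_first_success(prompt: str, providers: Sequence[Provider]) -> Tuple[str, str]:
    """Send the prompt to every provider at once; return (name, reply) of the first non-empty reply."""
    if not providers:
        raise ValueError("need at least one provider")
    errors: List[str] = []
    with ThreadPoolExecutor(max_workers=len(providers)) as pool:
        futures = {pool.submit(call, prompt): name for name, call in providers}
        # as_completed yields futures in the order they finish, fastest first.
        for future in as_completed(futures):
            name = futures[future]
            try:
                reply = future.result()
            except Exception as exc:  # a failed platform simply drops out of the race
                errors.append(f"{name}: {exc}")
                continue
            if reply:
                # Note: leaving the with-block still waits for slower calls to finish.
                return name, reply
            errors.append(f"{name}: empty reply")
    raise RuntimeError("all providers failed: " + "; ".join(errors))


# Hypothetical providers that only demonstrate the control flow.
def official_api(prompt: str) -> str:
    raise ConnectionError("server busy")


def platform_a(prompt: str) -> str:
    return ""  # answered, but with no content


def platform_b(prompt: str) -> str:
    return f"R1 reply to: {prompt}"


print(ask_first_success("Recite The Yueyang Tower", [
    ("official", official_api),
    ("platform-a", platform_a),
    ("platform-b", platform_b),
]))
```

Racing providers trades extra token spend for resilience and lower latency; calling them one after another instead would save tokens but make users wait through each failure.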

To win over the developers spilling out of DeepSeek, leading cloud vendors have begun hosting frequent developer events. "Join an event and you get free computing power; unless you're calling in bulk, small developers can use it almost for free," said Yang Huichao, Technical Director of Yibiao AI.

Yet with DeepSeek at the height of its popularity, even as the first developers leave, more keep flocking in, hoping to cash in on its traffic.

Qi Jian's project, a role-playing AI companion app built on DeepSeek's API, gained about 3,000 active users in its first week after launching on February 2.

Although some users reported errors when the app called DeepSeek's API, 60% of users have asked Qi Jian to release an Android version soon. Every day, at least dozens of users message his social media account asking for download links. "An AI companion platform built on DeepSeek" has clearly become the label behind the app's breakout.

According to Wujie News's count, the list of apps integrating DeepSeek on DeepSeek's official website had only 182 entries before 2025 but has since grown to 488.

On one hand, DeepSeek has become the "shining light of domestic products," attracting 100 million users in just seven days; on the other, the earliest developers building on DeepSeek are turning to other large models because overloaded traffic has congested its service.

For developers, prolonged service outages are no longer simple glitches; they have become cracks between the world of code and the logic of business. Whether flooding in or fleeing out, developers are forced to run survival calculations that weigh migration costs, and all of them must absorb the aftershocks of DeepSeek's explosive growth.

One

After his mini-program's backend was forced offline for three days during the Spring Festival, Lin Sen left DeepSeek, which he had used for more than a year, on the sixth day of the Lunar New Year and switched back to ChatGPT to keep the program running normally.

Even though his API calls now cost nearly ten times as much, service stability had become the higher priority.

It is worth noting that leaving DeepSeek for another large model is not as easy for developers as switching models inside an app is for users. "Different large language models, and even different versions of the same model, respond to the same prompt in subtly different ways." Even though Lin Sen had kept calling ChatGPT all along, migrating every key step of his pipeline from DeepSeek to ChatGPT and getting stable, high-quality output still took him more than half a day.

Flipping the switch itself might take only two seconds, but "many developers spend a week repeatedly adjusting prompts and retesting after moving to a new model," Lin Sen told Wujie News.

For small developers like Lin Sen, DeepSeek's shortage of server capacity is understandable. But with advance notice, they could have avoided many losses, in both time and app maintenance costs.

After all, "logging into the DeepSeek developer backend requires registering with a phone number; a simple text message could have warned developers in advance." Instead, the losses fall on the very developers who supported DeepSeek when it was still relatively unknown.

When developers are deeply coupled with a large model platform, stability undoubtedly becomes an unspoken contract. A frequently fluctuating service interface is enough to make developers reassess their loyalty to the platform.

Just last year, while calling Mistral's models (Mistral is a leading French large model company), Lin Sen ran into a billing error that charged him twice. After he sent an email, Mistral fixed the problem within an hour and added a €100 voucher as compensation. That responsiveness earned Lin Sen's trust, and he has now moved some of his services back to Mistral.

Yang Huichao, Technical Director of Yibiao AI, began looking for a way out after DeepSeek V3 was released.

Using DeepSeek to write poems or rants is one thing; what about using it to write bids? Yang Huichao, who is responsible for the company's AI bidding project, started searching for alternatives once V3 launched. In the professional field of bidding, he says, "DeepSeek's stability is increasingly insufficient."

The reasoning capabilities that made DeepSeek R1 famous hold little appeal for Yang Huichao. After all, "As a developer, your software's core reasoning comes from your programs and algorithms, not so much from the model's base capabilities. Even the oldest GPT-3.5 can produce good results if algorithms correct its output; the model just needs to give stable answers."

In actual use, DeepSeek strikes Yang Huichao as a clever but lazy "good student."

After the upgrade to V3, Yang Huichao found that DeepSeek answered some complex questions more successfully, but its stability had slipped to an unacceptable level. "Now, out of every 10 questions, at least one output is unstable. Besides the content you asked for, DeepSeek often goes off on a tangent and generates irrelevant content."

Bids, for example, leave no room for error. Developers usually instruct the model to return its output as a JSON structure (each call's instructions specify a fixed set of fields so that the same fields come back every time), which downstream functions then consume. If the output contains errors or inaccuracies, those downstream calls fail.
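This failure mode is why many developers validate the model's JSON before passing it on. A minimal sketch under stated assumptions: the field names, `parse_bid_section` and `generate_with_retry` are hypothetical, `call_model` stands in for whatever function actually queries the model, and retrying is only one possible response to bad output.

```python
import json
from typing import Callable, Dict, Sequence

# Hypothetical field names for one section of a bid; a real project defines its own.
REQUIRED_FIELDS = ("section_title", "content", "compliance_items")


def parse_bid_section(raw: str, required: Sequence[str] = REQUIRED_FIELDS) -> Dict:
    """Parse a model reply as a JSON object and check that every required field is present.

    Raises ValueError on bad output so the caller can retry instead of passing
    broken data to downstream functions.
    """
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise ValueError(f"reply is not valid JSON: {exc}") from exc
    if not isinstance(data, dict):
        raise ValueError("reply is valid JSON but not an object")
    missing = [field for field in required if field not in data]
    if missing:
        raise ValueError(f"reply is missing fields: {missing}")
    return data


def generate_with_retry(call_model: Callable[[], str], max_attempts: int = 3) -> Dict:
    """Call the model until it returns a valid object or the attempts run out."""
    last_error = None
    for _ in range(max_attempts):
        try:
            return parse_bid_section(call_model())
        except ValueError as exc:
            last_error = exc
    raise RuntimeError(f"no valid reply after {max_attempts} attempts: {last_error}")


# Demo: the first reply wanders off with chatty preamble, the second is clean JSON.
replies = iter([
    'Sure! Here is the section you asked for: {"section_title": "Scope"}',
    '{"section_title": "Scope", "content": "Project scope text", "compliance_items": []}',
])
result = generate_with_retry(lambda: next(replies))
print(result["section_title"])  # Scope
```

Failing loudly at the parsing step keeps a malformed reply from surfacing later as a harder-to-trace error in the function calls that consume it.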

"DeepSeek R1 may have significantly improved reasoning capabilities compared to the previous V3 version, but its stability does not meet commercial standards," Yang Huichao mentioned on the @ProductivityMark account.

Caption: Glitches occurred during the generation process of DeepSeek V3. Image source: @ProductivityMark account

As one of the first users, having joined in early 2024 during the DeepSeek-Coder era, Yang Huichao does not deny that DeepSeek is a good student. But to guarantee the quality and stability of the bids it generates, he has had to turn to other domestic large model companies that cater more to business (B-end) users.

After all, DeepSeek, once dubbed the Pinduoduo of the AI world, quickly gathered a following of small and medium-sized AI developers thanks to its cost-effectiveness. Now, however, the only way to call DeepSeek directly and reliably is to deploy it locally. "Deploying DeepSeek R1 costs 300,000 to 400,000 yuan; at online API prices, I could never spend 300,000 yuan in my lifetime."
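Yang Huichao's quip holds up under back-of-the-envelope arithmetic. The sketch below is illustrative only: it takes the 300,000-yuan figure from his quote and borrows two numbers reported elsewhere in this article as assumptions, DeepSeek V2's launch price of 2 yuan per million tokens and Lin Sen's daily bill of about 3 yuan. R1's actual pricing differs, and hardware running costs are ignored.

```python
def tokens_covered(budget_yuan: float, yuan_per_million_tokens: float) -> float:
    """Number of tokens a fixed budget buys at a flat per-million-token API price."""
    return budget_yuan / yuan_per_million_tokens * 1_000_000


def days_covered(budget_yuan: float, daily_api_spend_yuan: float) -> float:
    """Number of days of API use a fixed budget pays for at a constant daily spend."""
    return budget_yuan / daily_api_spend_yuan


budget = 300_000  # low end of the quoted local-deployment cost, in yuan

# Assumed price: the 2 yuan per million tokens DeepSeek V2 launched with.
print(f"{tokens_covered(budget, 2):,.0f} tokens")  # 150,000,000,000 tokens

# Assumed usage: about 3 yuan a day, the upper end of Lin Sen's optimized bill.
print(f"{days_covered(budget, 3) / 365:.0f} years")  # 274 years
```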

Now that DeepSeek is neither cheap enough nor stable enough, developers like Yang Huichao, unable to rely on the service, are leaving in droves.

Two

Not long ago, Lin Sen and others were among the first steadfast users to choose DeepSeek.

In June 2024, while developing his AI mini-program "Youth Listening to the World," Lin Sen compared dozens of large model platforms at home and abroad. He needed a large model to process thousands of news articles a day: filtering and ranking them to find technology and nature stories suitable for teenagers, then processing the article text.

The model had to be not only smart but also affordable.

Processing thousands of news articles a day consumes a large number of tokens. For an independent developer like Lin Sen, ChatGPT is very expensive and suitable only for core tasks; for fast filtering and analysis of large volumes of text, he relies on other, cheaper large models.

At the same time, calling foreign models such as Mistral, Gemini, or ChatGPT is cumbersome: you need a server abroad, a relay station, and a foreign credit card to buy tokens.

Lin Sen was able to recharge his ChatGPT account with a friend's UK credit card. But routing requests through an overseas server can also slow API responses, so Lin Sen began looking for a domestic alternative to ChatGPT.

DeepSeek made a strong impression on Lin Sen. "At the time, DeepSeek wasn't the most famous, but its responses were the most stable." For example, when he sent 100 requests at one call every 10 seconds, other domestic large models could fail to return anything 30% of the time, while DeepSeek returned a result every time, with response quality comparable to ChatGPT and to the large model platforms of BAT (Baidu, Alibaba, and Tencent).
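Lin Sen's informal benchmark, 100 requests at one call every 10 seconds while counting empty replies, is easy to reproduce. A minimal sketch, assuming `call` is any zero-argument function that wraps a real API request; `empty_response_rate` and the fake `flaky_model` are hypothetical and only illustrate the measurement, not Lin Sen's actual script.

```python
import time
from itertools import count
from typing import Callable, Optional


def empty_response_rate(
    call: Callable[[], Optional[str]],
    n_requests: int = 100,
    interval_s: float = 10.0,
    sleep: Callable[[float], None] = time.sleep,
) -> float:
    """Send n_requests calls spaced interval_s seconds apart; return the share that came back empty.

    A call that raises or returns None/"" counts as "no content returned".
    """
    empty = 0
    for i in range(n_requests):
        try:
            reply = call()
        except Exception:
            reply = None
        if not reply:
            empty += 1
        if i < n_requests - 1:
            sleep(interval_s)  # pace the requests: one every interval_s seconds
    return empty / n_requests


# Demo with a fake model that returns nothing on 3 of every 10 requests.
# sleep is stubbed out so the demo runs instantly instead of taking over 16 minutes.
_calls = count()


def flaky_model() -> str:
    return "" if next(_calls) % 10 < 3 else "ok"


rate = empty_response_rate(flaky_model, sleep=lambda seconds: None)
print(rate)  # 0.3
```

Injecting `sleep` as a parameter keeps the pacing logic testable without waiting out the real interval.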

Compared to the API call prices of ChatGPT and BAT's large models, DeepSeek was indeed very cheap.

After handing most of the news reading and preliminary analysis to DeepSeek, Lin Sen found that calling DeepSeek cost one-tenth as much as ChatGPT. After he optimized his prompts, his daily DeepSeek bill fell to just 2 to 3 yuan. "It may not be the best compared to ChatGPT, but DeepSeek's price is extremely low, so for my project its cost-effectiveness is very high."

Caption: Lin Sen uses a large model to collect and analyze news (left) which is ultimately presented in the "Youth Listening to the World" mini-program (right). Image source: Provided by Lin Sen

Cost-effectiveness has become the main reason developers choose DeepSeek. In 2023, Yang Huichao first moved his company's AI project from ChatGPT to Mistral, mainly to control costs. Then, in May 2024, DeepSeek launched V2 with API calls priced at 2 yuan per million tokens, a price that dealt other large model vendors a crushing blow, and Yang Huichao moved his company's AI bidding tool project to DeepSeek.

At the same time, after testing, Yang Huichao discovered that the BAT companies, which had already captured the B-end market through cloud services, had "too heavy a platform."

For a startup like Yibiao AI, choosing BAT would mean being pushed into bundled cloud-service spending. Yang Huichao only needed simple calls to a large model service, and for that DeepSeek's API was clearly more convenient.

In terms of migration costs, DeepSeek also had the upper hand.

Both Lin Sen and Yang Huichao originally built their apps on OpenAI's interface. Switching to BAT's large model platforms would have meant redeveloping the underlying system. DeepSeek, however, offers an OpenAI-compatible interface, so moving to it is a "pain-free switch": just change the platform address, "in just one minute."
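The "pain-free switch" works because OpenAI-compatible services accept the same request shape. The sketch below builds, but does not send, a chat-completions request using only Python's standard library. The endpoint URLs and model names (`gpt-4o-mini`, `deepseek-chat`) are assumptions based on the providers' public documentation and should be checked before use; `build_chat_request` is a hypothetical helper, not part of any SDK.

```python
import json
import urllib.request

# Assumed endpoints and model names; verify against each provider's current docs.
PROVIDERS = {
    "openai": {"base_url": "https://api.openai.com/v1", "model": "gpt-4o-mini"},
    "deepseek": {"base_url": "https://api.deepseek.com", "model": "deepseek-chat"},
}


def build_chat_request(provider: str, api_key: str, prompt: str) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat-completions request.

    Only the base URL, model name and key differ between providers; the path,
    headers and JSON body keep the same shape.
    """
    cfg = PROVIDERS[provider]
    body = {"model": cfg["model"], "messages": [{"role": "user", "content": prompt}]}
    return urllib.request.Request(
        url=cfg["base_url"].rstrip("/") + "/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json", "Authorization": f"Bearer {api_key}"},
        method="POST",
    )


req = build_chat_request("deepseek", "YOUR_API_KEY", "Summarize today's science news for teenagers")
print(req.full_url)  # https://api.deepseek.com/chat/completions

# To actually send it (needs a valid key and network access):
# with urllib.request.urlopen(req) as resp:
#     print(json.load(resp)["choices"][0]["message"]["content"])
```

Keeping provider settings in one table is what turns the migration into a one-line change; as Lin Sen notes, the prompts may still need retuning afterwards.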

The Xiaochuang AI Q&A machine shipped with DeepSeek from its first day of sales: of the machine's five core roles, the Chinese-language tutor and the composition tutor were built on DeepSeek.

Lou Chi, a partner at Xiaochuang, was also impressed by DeepSeek last June. "DeepSeek has excellent Chinese comprehension; at that time it was the only large model that could recite 'The Yueyang Tower' without error." Lou Chi told Wujie News that, compared with the conventional, formulaic, document-style output of other large models, DeepSeek often excelled at imaginative writing when teaching children how to write essays.

Before the social media craze of using DeepSeek to write poetry and science fiction, DeepSeek's elegant writing style had already caught the attention of the Xiaochuang AI team.

Developers are still hoping DeepSeek will restore its API service. For now, whether they migrate to platforms hosting the full version of DeepSeek R1 or turn to other large model vendors, every option seems to be a "difficult choice."

Three

Meanwhile, competitors are racing to catch up with DeepSeek's distinctive strength in deep reasoning.

At home, Baidu and Tencent have recently added deep-thinking capabilities to their in-house large models. Abroad, OpenAI rushed out "Deep Research" in February, applying its models' reasoning capabilities to online search, which will be available to Pro, Plus, and Team users. Google DeepMind also released the Gemini 2.0 model series in February, whose experimental 2.0 Flash Thinking version is enhanced for reasoning.

It is worth noting that DeepSeek still works mainly with text, whereas both ChatGPT and Gemini 2.0 already bring their reasoning capabilities to multimodal input, accepting video, voice, documents, and images.

For DeepSeek, while catching up with multimodal capabilities, a greater challenge comes from competitors closing in on pricing.

On the cloud deployment side, many leading cloud vendors have integrated DeepSeek, both to share in its traffic and to bind customers to their cloud services. In some cases, access to DeepSeek's models has even become a "gift" bundled with enterprise cloud services.

Baidu founder Li Yanhong recently said that for large language models, "inference costs can be cut by more than 90% every 12 months."
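Taken literally, the claim compounds year over year: a cut of at least 90% every 12 months leaves at most 10% of the previous year's cost. Writing $C_0$ for today's cost and $C(t)$ for the cost after $t$ months (notation introduced here for illustration, evaluated at whole years):

$$
C(t) \le C_0\,(1 - 0.9)^{t/12} = C_0 \cdot 10^{-t/12}, \qquad t = 12, 24, 36, \dots
$$

so after two years the cost would be at most $C_0/100$, and after three years at most $C_0/1000$.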

As inference costs keep falling, BAT's API prices are bound to keep dropping, and DeepSeek's cost advantage will come under pressure as the big companies launch a new round of price wars.

And the API price war is only beginning; large model vendors are also competing on the service they give developers.

Lin Sen has worked with many large model platforms, and one tech giant stood out: it assigns dedicated account managers to its clients, who proactively contact developers whenever there is instability or a technical issue.

Despite positioning itself as an open-source large model platform that makes AI more accessible to developers, DeepSeek does not even offer a way to request invoices on its official website.

"On other large model platforms, you can issue an invoice directly in the backend after each API recharge. With DeepSeek, you have to leave the official website and add customer service on WeChat to get an invoice," Yang Huichao told Wujie News. On both price and service, DeepSeek's "cost-effective" label is starting to look a little shaky.

An AI product manager at a leading company told Wujie News that some internet company executives insist on replacing their existing large models with DeepSeek, with no regard for the time it takes to re-tune prompts for a new model. Moreover, even the full version of DeepSeek R1 lacks many common capabilities, such as function calling.

Compared to BAT, which has successfully run B-end service scenarios using cloud services, DeepSeek still lags behind in terms of convenience.

However, the traffic effect of DeepSeek has not yet faded, and many are still eager to ride the wave.

Some companies claim to have integrated DeepSeek when they have merely started calling the API and recharged a few hundred yuan. Others announce that they have deployed the DeepSeek model when in reality their employees have only watched tutorials on Bilibili and downloaded a one-click installer. In this wave of DeepSeek hype, the genuine and the chaotic are mixed together.

The tide will eventually recede, but it is clear that DeepSeek has much more homework to do.
