Author: PonderingDurian, Delphi Digital Researcher

Compiled by: Pzai, Foresight News

Given that cryptocurrencies are essentially open-source software with built-in economic incentives, and that AI is disrupting the way software is written, AI will have a significant impact on the entire blockchain space.

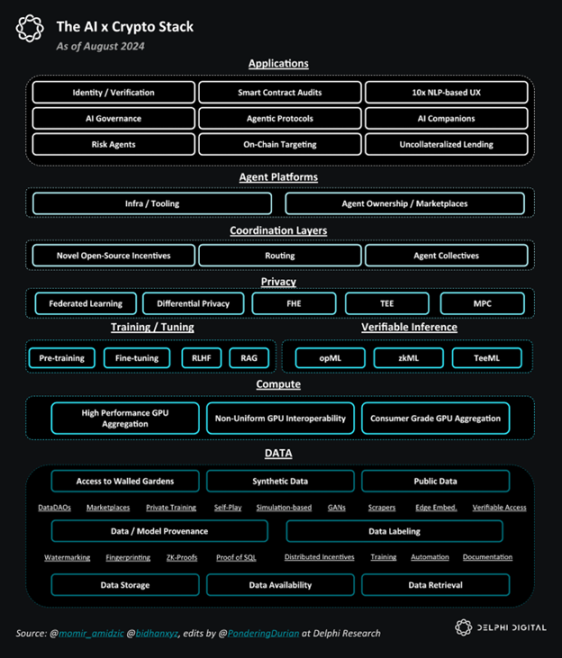

AI x Crypto Overall Stack

DeAI: Opportunities and Challenges

In my view, the biggest challenge facing DeAI lies in the infrastructure layer: building foundation models requires substantial capital, and the returns to scale in data and compute are very high.

Given scaling laws, tech giants have a natural advantage: during the Web2 era, they earned monopoly profits by aggregating consumer demand and, over a decade of artificially low interest rates, reinvested those profits into cloud infrastructure. Now the internet giants are trying to capture the AI market by dominating data and compute, the key inputs to AI:

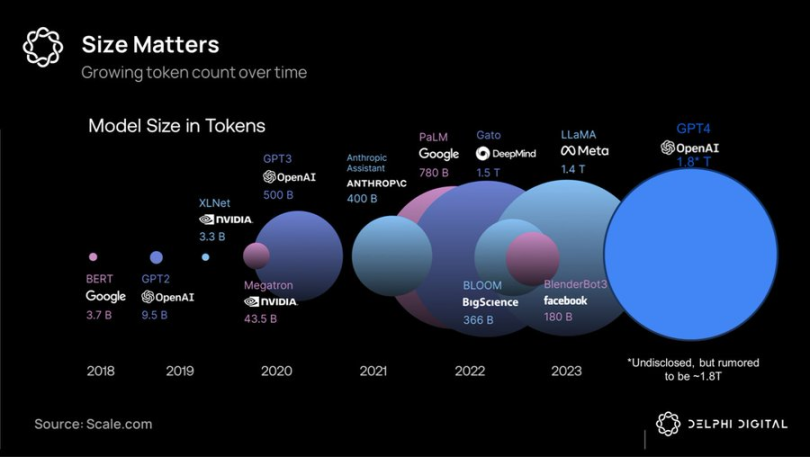

Token volume comparison of large models

Because large-scale training is capital-intensive and bandwidth-hungry, unified superclusters remain the best option. They give the tech giants the best-performing closed-source models, which the giants plan to rent out at monopoly margins while reinvesting the earnings into each subsequent generation of products.

However, the moat in AI has turned out to be shallower than Web2 network effects. Leading frontier models depreciate quickly relative to the field, especially as Meta pursues a "scorched earth" policy, investing billions of dollars in open-source frontier models such as Llama 3.1 that have reached SOTA levels.

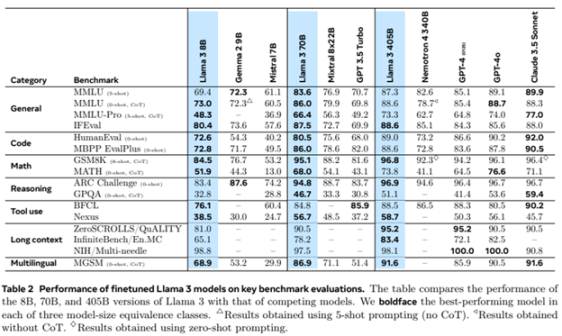

Llama 3 large model ratings

At this point, emerging research on low-latency decentralized training methods may commoditize (some) frontier business models. As the price of intelligence falls, competition may (at least partially) shift from hardware superclusters (favoring tech giants) to software innovation (slightly favoring open source and crypto).

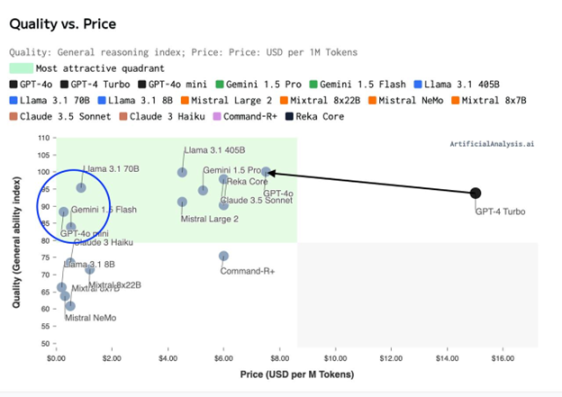

Capability Index (Quality) - Training Price Distribution

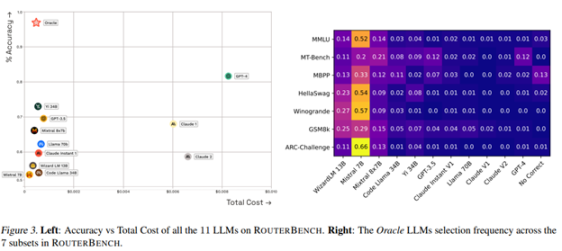

Given the computational efficiency of "mixture of experts" architectures and of large-model synthesis and routing, we are likely heading not toward a world of 3-5 giant models but toward a world of millions of models with different cost/performance trade-offs: an interwoven network of intelligence (a hive).

This creates a significant coordination problem: blockchain and cryptocurrency incentive mechanisms should be well-suited to help solve this issue.

Core DeAI Investment Areas

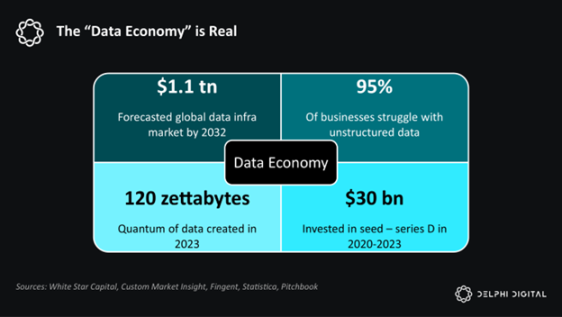

Software is eating the world. AI is eating software. And AI is fundamentally about data and computation.

Delphi is optimistic about the various components in this stack:

Simplified AI x Crypto Stack

Infrastructure

Given that AI's power comes from data and computation, DeAI infrastructure is dedicated to procuring data and computation as efficiently as possible, typically employing cryptocurrency incentive mechanisms. As mentioned earlier, this is the most challenging part of the competition, but considering the scale of the end market, it may also be the most rewarding part.

Computation

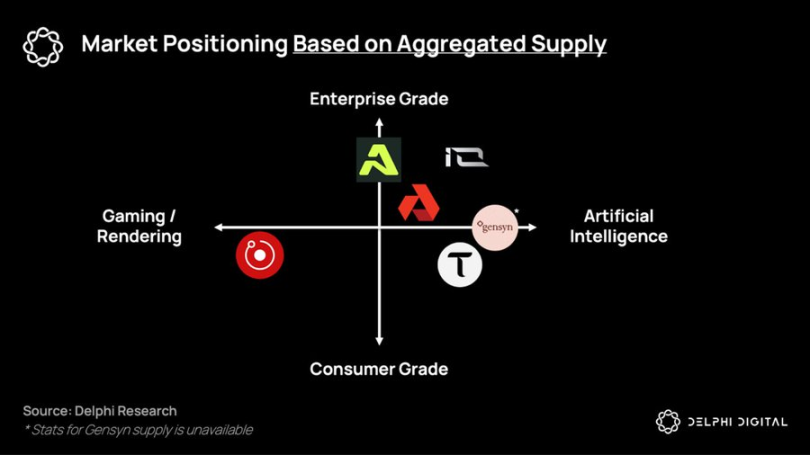

So far, distributed training protocols and GPU marketplaces have been constrained by latency, but they aim to coordinate latent, heterogeneous hardware to provide cost-effective, on-demand compute for those shut out by the giants' integrated solutions. Companies like Gensyn, Prime Intellect, and Neuromesh are advancing distributed training, while io.net, Akash, and Aethir are enabling low-cost inference closer to the edge.

Project ecological niche distribution based on aggregated supply

Data

In a ubiquitous intelligent world based on smaller, more specialized models, the value and monetization of data assets are increasing.

So far, DePIN has been praised largely for its ability to build lower-cost hardware networks compared to capital-intensive enterprises (like telecom companies). However, DePIN's biggest potential market will emerge in the collection of new types of datasets that will flow into on-chain intelligent systems: agent protocols (to be discussed later).

In this world, the largest potential market, human labor, is being replaced by data and computation. DeAI infrastructure gives non-technical individuals a way to seize the means of production and contribute to the coming network economy.

Middleware

The ultimate goal of DeAI is effective composable computing. Much like DeFi's money legos, DeAI compensates for today's shortfall in absolute performance through permissionless composability, incentivizing an open ecosystem of software and computational primitives that compounds over time and thereby (hopefully) surpasses the incumbents.

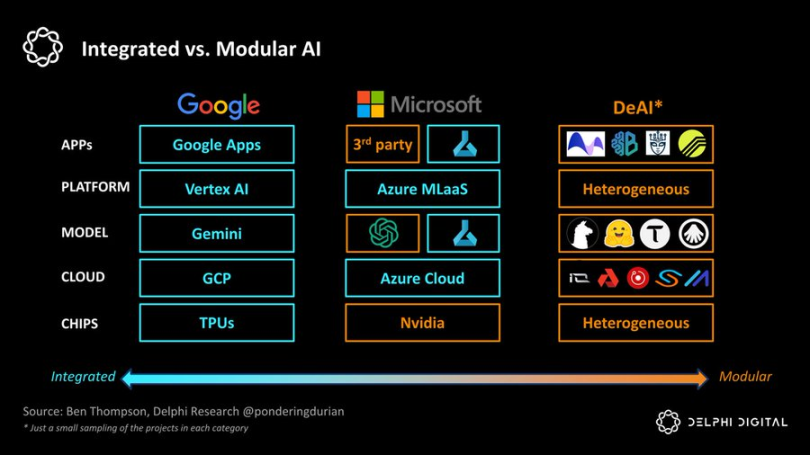

If Google represents the extreme of "integration," then DeAI represents the extreme of "modularity." As Clayton Christensen reminds us, in emerging industries, integrated approaches often gain a lead by reducing friction in the value chain, but as the field matures, modular value chains will carve out a place by enhancing competition and cost efficiency across the layers of the stack:

Integrated vs. Modular AI

We are very optimistic about several categories that are crucial to realizing this modular vision:

Routing

In a world of fragmented intelligence, how does one choose the right model, at the right time, at the best price? Demand-side aggregators have always captured value (see Aggregation Theory), and routing is critical for optimizing the Pareto curve between performance and cost in a world of networked intelligence:

Bittensor has been leading in the first generation of products, but many specialized competitors have also emerged.

Allora hosts competitions between different models across various "themes" in a "context-aware" and self-improving manner, informing future predictions based on historical accuracy under specific conditions.
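The idea of weighting competing models by their historical accuracy under specific conditions can be illustrated with a minimal sketch. This is not Allora's actual mechanism: the class name, the inverse-mean-error weighting rule, and the context labels are all assumptions chosen for illustration.

```python
from collections import defaultdict


class ContextAwareEnsemble:
    """Hypothetical sketch: weight models by past accuracy within a context."""

    def __init__(self):
        # errors[context][model] -> list of past absolute errors
        self.errors = defaultdict(lambda: defaultdict(list))

    def record(self, context, model, predicted, actual):
        """Store how far off a model was under a given context."""
        self.errors[context][model].append(abs(predicted - actual))

    def weights(self, context, models, eps=1e-9, default_error=1.0):
        """Normalized inverse-mean-error weights (an assumed rule)."""
        raw = {}
        for model in models:
            history = self.errors[context][model]
            # Models with no history in this context get a neutral default.
            mean_err = sum(history) / len(history) if history else default_error
            raw[model] = 1.0 / (mean_err + eps)
        total = sum(raw.values())
        return {model: w / total for model, w in raw.items()}

    def combine(self, context, predictions):
        """Blend current predictions using the context-specific weights."""
        w = self.weights(context, list(predictions))
        return sum(w[model] * value for model, value in predictions.items())


ensemble = ContextAwareEnsemble()
# Model "a" was more accurate in volatile markets, model "b" in calm ones.
ensemble.record("high_vol", "a", predicted=101.0, actual=100.0)
ensemble.record("high_vol", "b", predicted=103.0, actual=100.0)
ensemble.record("low_vol", "a", predicted=104.0, actual=100.0)
ensemble.record("low_vol", "b", predicted=101.0, actual=100.0)

high_vol_weights = ensemble.weights("high_vol", ["a", "b"])
low_vol_weights = ensemble.weights("low_vol", ["a", "b"])
blended = ensemble.combine("high_vol", {"a": 100.0, "b": 110.0})
```

The same two models receive different weights depending on the context label, which is the sense in which such a scheme is "context-aware".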

Morpheus aims to be the "demand-side router" for Web3 use cases—essentially a type of open-source local agent capable of grasping the relevant context of users and effectively routing queries through emerging components of DeFi or Web3's "composable computing" infrastructure, akin to "Apple Intelligence."

Interoperability protocols for agents, such as Theoriq and Autonolas, aim to push modular routing to the extreme, turning a composable ecosystem of flexible agents and components into a fully mature on-chain service.
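To make the routing problem concrete, here is a minimal sketch of choosing a model along the Pareto curve between performance and cost. It does not describe any of the protocols above; the model names, quality scores, and prices are invented, and a real router would also account for latency, context, and trust.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelOffer:
    name: str       # hypothetical model identifier
    quality: float  # benchmark-style score, higher is better
    price: float    # cost per request, lower is better


def pareto_frontier(offers):
    """Return the offers not dominated on both quality and price."""
    frontier = []
    best_quality = float("-inf")
    # Walk from cheapest to most expensive; on price ties, best quality first.
    for offer in sorted(offers, key=lambda o: (o.price, -o.quality)):
        # A pricier offer survives only if it beats every cheaper one on quality.
        if offer.quality > best_quality:
            frontier.append(offer)
            best_quality = offer.quality
    return frontier


def route(offers, min_quality):
    """Pick the cheapest frontier offer that meets the quality floor, or None."""
    for offer in pareto_frontier(offers):  # frontier is sorted by price
        if offer.quality >= min_quality:
            return offer
    return None


offers = [
    ModelOffer("small-local", quality=0.62, price=0.10),
    ModelOffer("mid-hosted", quality=0.78, price=0.40),
    ModelOffer("overpriced", quality=0.70, price=0.90),
    ModelOffer("frontier", quality=0.91, price=1.50),
]

frontier_names = [o.name for o in pareto_frontier(offers)]
chosen = route(offers, min_quality=0.75)
unreachable = route(offers, min_quality=0.95)
```

In this toy market the "overpriced" offer is dominated (it costs more than "mid-hosted" yet scores lower), so a router never needs to consider it.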

In summary, in a world of rapidly fragmenting intelligence, supply and demand aggregators will play an extremely powerful role. If Google is a $2 trillion company for indexing the world's information, then the winners among demand-side routers, whether Apple, Google, or Web3 solutions, will be the companies that index agent intelligence, and they will operate at even greater scale.

Co-processors

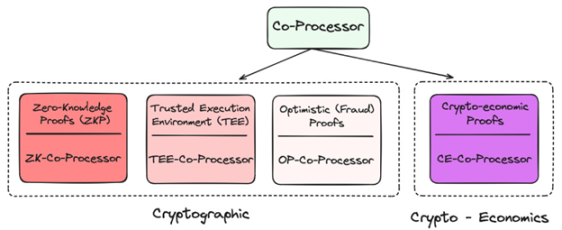

Given its decentralization, blockchain is significantly limited in both data and computation. How can we bring computation and data-intensive AI applications that users need onto the blockchain? Through co-processors!

Application layer of co-processors in Crypto

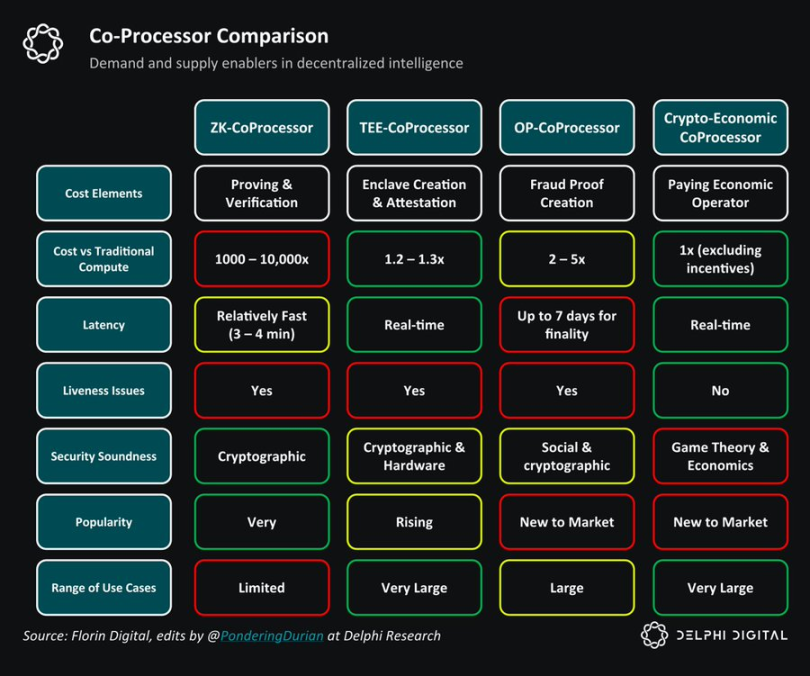

They all provide different technologies to "validate" the underlying data or model being used as "oracles," which can minimize new trust assumptions on-chain while significantly enhancing their capabilities. So far, many projects have utilized zkML, opML, TeeML, and cryptoeconomic methods, each with its own advantages and disadvantages:

Co-processor comparison

At a higher level, co-processors are crucial for the intelligence of smart contracts—providing solutions similar to "data warehouses" for querying more personalized on-chain experiences or verifying whether a given inference has been correctly completed.

TEE (Trusted Execution Environment) networks, such as Super, Phala, and Marlin, have recently gained popularity due to their practicality and ability to support large-scale applications.

Overall, co-processors are essential for integrating blockchains with high determinism but low performance with high-performance but probabilistic agents. Without co-processors, AI would not appear in this generation of blockchains.

Developer Incentives

One of the biggest issues with open-source AI development is the lack of incentive mechanisms that make it sustainable. AI development is highly capital-intensive, and the opportunity costs of computation and AI knowledge work are very high. Without proper incentives to reward open-source contributions, this field will inevitably lose to super-capitalist supercomputers.

From Sentient to Pluralis, Sahara AI, and Mira, these projects aim to launch networks that allow decentralized networks of individuals to contribute to network intelligence while receiving appropriate incentives.

By building compensation into the business model, the compounding rate of open source should accelerate, offering developers and AI researchers a global alternative to big tech companies, with the prospect of substantial rewards based on the value they create.

While achieving this is very challenging and competition is intensifying, the potential market here is enormous.

GNN Models

Large language models identify patterns in large text corpora and learn to predict the next word, while Graph Neural Networks (GNNs) process, analyze, and learn from graph-structured data. Since on-chain data primarily consists of complex interactions between users and smart contracts, in other words, a graph, GNNs seem to be a reasonable choice to support on-chain AI use cases.
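To illustrate what learning from graph-structured data means, here is a minimal sketch of one round of message passing, the basic operation underlying GNNs. The wallet and pool names and their feature vectors are invented, and the fixed 50/50 mixing stands in for the learned weights and nonlinearity a real GNN layer would apply.

```python
def message_passing_round(features, edges):
    """One round of mean-aggregation message passing on an undirected graph."""
    # Build an adjacency list from the edge list.
    neighbors = {node: [] for node in features}
    for src, dst in edges:
        neighbors[src].append(dst)
        neighbors[dst].append(src)

    updated = {}
    for node, own in features.items():
        messages = [features[n] for n in neighbors[node]]
        if messages:
            # Average each feature dimension across the node's neighbors.
            aggregated = [sum(vals) / len(messages) for vals in zip(*messages)]
        else:
            aggregated = [0.0] * len(own)
        # Mix own and neighbor features equally (an assumed, unlearned rule).
        updated[node] = [0.5 * a + 0.5 * b for a, b in zip(own, aggregated)]
    return updated


# Hypothetical on-chain graph: two wallets that both interact with one pool.
features = {
    "wallet_a": [1.0, 0.0],
    "wallet_b": [0.0, 0.0],
    "dex_pool": [0.0, 1.0],
}
edges = [("wallet_a", "dex_pool"), ("wallet_b", "dex_pool")]

embeddings = message_passing_round(features, edges)
```

After one round, each node's vector reflects the nodes it interacts with, so wallets with similar on-chain neighborhoods end up with similar representations.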

Projects like Pond and RPS are attempting to build foundational models for web3 that could be applied in trading, DeFi, and even social use cases, such as:

- Price Prediction: On-chain behavior models predicting prices, automated trading strategies, sentiment analysis

- AI Finance: Integration with existing DeFi applications, advanced yield strategies and liquidity utilization, better risk management/governance

- On-chain Marketing: More targeted airdrops/positioning, recommendation engines based on on-chain behavior

These models will heavily utilize data warehouse solutions like Space and Time, Subsquid, Covalent, and Hyperline, which I am also very optimistic about.

GNNs may prove to be the large models of the blockchain, with Web3 data warehouses as essential supporting tools that provide OLAP (Online Analytical Processing) capabilities for Web3.

Applications

In my view, on-chain agents may be key to solving the well-known user experience issues in cryptocurrency, but more importantly, over the past decade, we have invested billions into Web3 infrastructure, yet the utilization rate from the demand side is pitifully low.

Don't worry, agents are here…

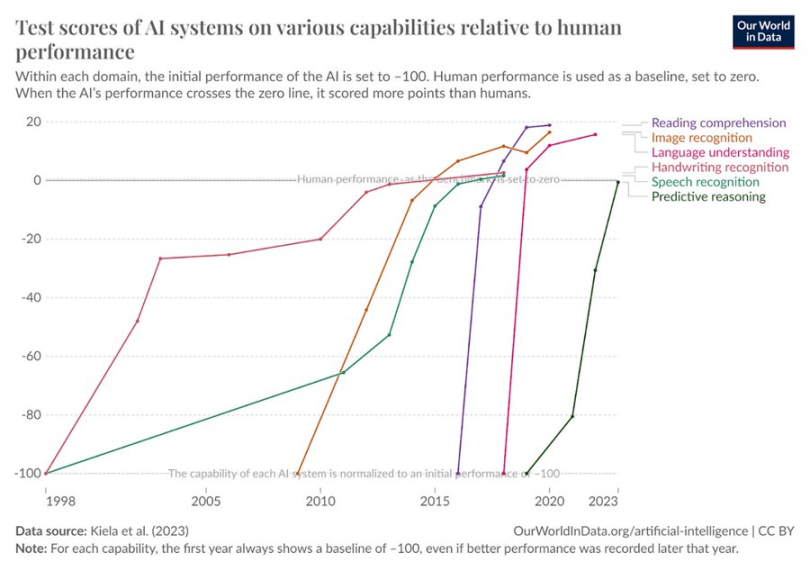

AI's test score growth across various dimensions of human behavior

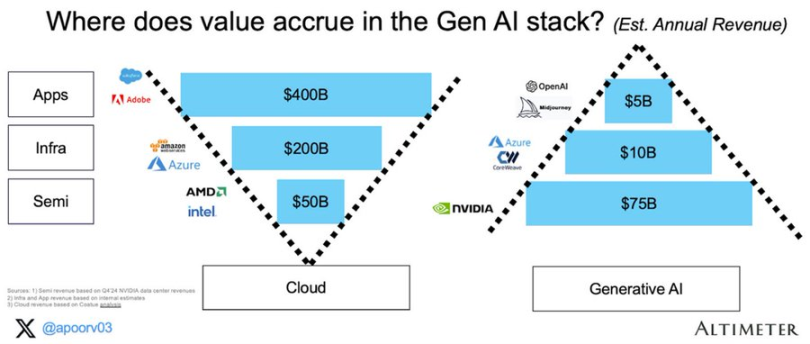

It also seems logical that these agents will leverage open, permissionless infrastructure, spanning payments and composable computing, to achieve more complex end goals. In the coming economy of networked intelligence, economic flows may no longer be B -> B -> C, but rather user -> agent -> computing network -> agent -> user.

The end result of this flow is agent protocols: application- or service-oriented businesses with limited overhead that run primarily on on-chain resources and meet the needs of end users (or of one another) in a composable network at far lower cost than traditional enterprises. Just as the application layer of Web2 captured most of the value, I am a proponent of the "fat agent protocol" theory in DeAI. Over time, value capture should shift to the upper layers of the stack.

Value accumulation in generative AI

The next Google, Facebook, and Blackrock are likely to be agent protocols, and the components to realize these protocols are being born.

DeAI Endgame

AI will change our economic landscape. Today, the market expects this value capture to be limited to a few large companies on the North American West Coast. DeAI represents a different vision: an open, composable network of intelligence that rewards and compensates even the smallest contributions, with more collective ownership and governance.

While some claims about DeAI are exaggerated, and many projects are trading at prices well above their actual traction, the scale of the opportunity is real. For those who are patient and visionary, the ultimate vision of truly composable computing in DeAI may prove to be the very justification for blockchains.
