By: Mario Gabriele

Compiled by: Block unicorn

AI's Holy War

I would rather live my life as if there is a God and die to find out there isn't, than live as if there isn't and die to find out that there is. - Blaise Pascal

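Religion is a funny thing. Maybe that is because it is completely unprovable in either direction, or maybe it is like one of my favorite lines: "You can't fight feelings with facts."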

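The defining feature of religious belief is that, while a faith is on the rise, it accelerates at such an incredible pace that doubting God's existence becomes almost impossible. How can you doubt a divine being when the people around you believe in it more and more? Where is there room for heresy when the world rearranges itself around a doctrine? Where is there space for dissent when temples and cathedrals, laws and norms are all arranged according to a new, unshakable gospel?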

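When the Abrahamic religions first emerged and spread across continents, or when Buddhism spread from India across Asia, the sheer momentum of belief created a self-reinforcing cycle. As more people converted and built elaborate theologies and rituals around these beliefs, questioning the basic premises became increasingly difficult. It is not easy to be a heretic in a sea of credulity. Grand churches, intricate scriptures, and thriving monasteries all served as physical evidence of the divine.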

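But the history of religion also shows how easily such structures collapse. As Christianity spread into Scandinavia, the old Norse faith crumbled within just a few generations. The religious system of ancient Egypt lasted for thousands of years, then finally vanished as newer, more durable beliefs rose and larger power structures emerged. Even within a single religion we have seen dramatic fractures: the Reformation tore Western Christianity apart, and the Great Schism split the Eastern and Western churches. These splits often began with seemingly trivial doctrinal disagreements and gradually evolved into entirely different belief systems.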

The Holy Text

God is a metaphor for that which transcends all levels of intellectual thought. It's as simple as that. - Joseph Campbell

Simply put, believing in God is religion. Perhaps creating God is no different.

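Since the field's inception, optimistic AI researchers have imagined their work as theogenesis, the creation of a god. Over the past few years, the explosive development of large language models (LLMs) has further strengthened believers' conviction that we are walking a sacred path.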

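It has also vindicated a blog post written in 2019. Although people outside the AI field learned of it only recently, "The Bitter Lesson" by Canadian computer scientist Richard Sutton has become an increasingly important text in the community, evolving from esoteric knowledge into the foundation of a new, all-encompassing religion.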

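In 1,113 words (every religion needs its sacred numbers), Sutton sums up a technical observation: "The biggest lesson that can be read from 70 years of AI research is that general methods that leverage computation are ultimately the most effective, and by a large margin." Progress in AI models has come from exponentially growing computational resources, riding the enormous wave of Moore's Law. Meanwhile, Sutton notes, much of the work in AI research has focused on optimizing performance through specialized techniques, such as adding human knowledge or narrow tooling. Although these optimizations may help in the short term, in Sutton's view they ultimately waste time and resources, like adjusting the fins on your surfboard or trying a new wax when a giant swell is rolling in.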

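This is the foundation of what we call the "Bitter Religion." It has a single commandment, usually called the "scaling laws" in the community: exponentially growing compute drives performance; the rest is folly.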
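To make the commandment concrete, here is a minimal sketch of what a scaling law can look like numerically. It assumes a power-law form with an irreducible floor, a shape commonly used for such curves; the function names and every constant below are hypothetical and chosen only for illustration, not taken from Sutton's essay or from any published fit.

```python
def loss(compute: float, a: float = 10.0, b: float = 0.05, floor: float = 1.5) -> float:
    """Hypothetical scaling curve: loss = floor + a * compute**(-b).

    `a`, `b`, and `floor` are made-up constants for illustration only.
    """
    if compute <= 0:
        raise ValueError("compute must be positive")
    return floor + a * compute ** (-b)


def compute_for_loss(target: float, a: float = 10.0, b: float = 0.05, floor: float = 1.5) -> float:
    """Invert the curve: how much compute reaches a given target loss."""
    if target <= floor:
        raise ValueError("target is at or below the irreducible floor")
    return (a / (target - floor)) ** (1.0 / b)


if __name__ == "__main__":
    # Each step multiplies compute by 100x; the loss falls by less each time.
    for exponent in range(18, 27, 2):
        c = 10.0 ** exponent
        print(f"compute=1e{exponent}  loss={loss(c):.3f}")
```

Under these assumed constants, every further fixed improvement in loss requires a multiplicative increase in compute, which is why believers and skeptics alike end up arguing about budgets.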

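The Bitter Religion has spread from large language models (LLMs) to world models, and it is now spreading quickly through the unconverted temples of biology, chemistry, and embodied intelligence (robotics and autonomous vehicles).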

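As Sutton's doctrine has spread, however, its definitions have begun to shift. This is the mark of every living, vital religion: argument, extension, annotation. "Scaling laws" no longer means only scaling compute (the ark is not just a boat); it now refers to a whole range of methods, with a few tricks mixed in, aimed at improving the performance of transformers and compute.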

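The canon now encompasses attempts to optimize every part of the AI stack, from tricks applied to the core model itself (model merging, mixture of experts (MoE), and knowledge distillation) all the way to generating synthetic data to feed these perpetually hungry gods, with plenty of experimentation in between.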

Warring Sects

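A question recently stirred up in the AI community, one with the air of a holy war about it, is whether the "Bitter Religion" still holds true.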

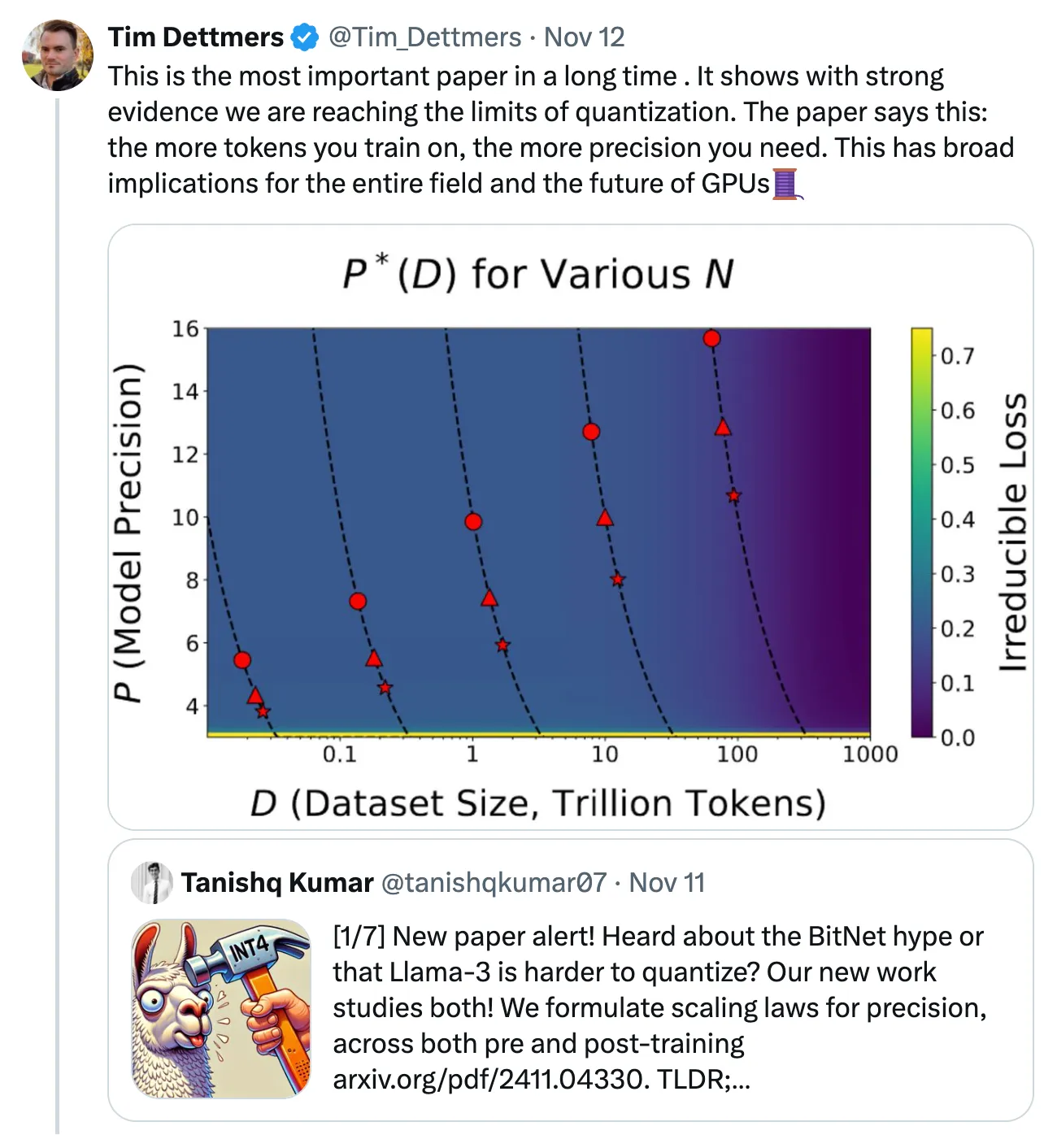

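This week, researchers from Harvard, Stanford, and MIT published a new paper titled "Scaling Laws for Precision," which set off the conflict. The paper discusses the end of efficiency gains from quantization, a family of techniques that has improved the performance of AI models and greatly benefited the open-source ecosystem. Tim Dettmers, a research scientist at the Allen Institute for AI, outlined its significance in the accompanying post, calling it "the most important paper in a long time." It continues a conversation that has been heating up over the past few weeks, and it reveals a notable trend: the growing consolidation of two religions.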

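OpenAI CEO Sam Altman and Anthropic CEO Dario Amodei belong to the same sect. Both have said with confidence that we will reach artificial general intelligence (AGI) within roughly the next 2-3 years. Altman and Amodei are arguably the two figures who depend most on the sanctity of the "Bitter Religion." All of their incentives lean toward overpromising and generating maximum hype in order to accumulate capital in a game dominated almost entirely by economies of scale. If the scaling laws are not the "Alpha and Omega," the first and the last, the beginning and the end, then what do you need $22 billion for?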

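Former OpenAI chief scientist Ilya Sutskever holds to a different set of principles. Together with other researchers (including many inside OpenAI, according to recent leaks), he believes scaling is approaching a ceiling. This group holds that sustaining progress and bringing AGI into the real world will necessarily require new science and research.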

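The Sutskever sect reasonably points out that the Altman sect's vision of continued scaling is not economically viable. As AI researcher Noam Brown asks: "After all, are we really going to train models that cost hundreds of billions or trillions of dollars?" And that does not include the additional billions in inference spending we would need if we shifted the scaling of compute from training to inference.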

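But true believers know their opponents' arguments well. The missionary on your doorstep can handle your Epicurean trilemma with ease. For their part, Brown and the Sutskever sect point to the possibility of scaling "test-time compute." Unlike what has been done so far, test-time compute does not rely on more compute to improve training; it devotes more resources to execution instead. When an AI model needs to answer your question or generate a piece of code or text, it can be given more time and more compute. It is the equivalent of shifting your effort from studying for the math exam to persuading the teacher to give you an extra hour and let you bring a calculator. For many in the ecosystem, this is the new frontier of the "Bitter Religion," as teams move from orthodox pre-training toward post-training and inference approaches.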
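One simple way to picture spending more compute at answer time is best-of-N sampling: draw several candidate answers and keep the one a scorer likes best. The sketch below is a toy under loud assumptions. `sample_answer` is a hypothetical stand-in for a model, and `score` is a hypothetical verifier that happens to know the right answer; real systems would use a learned scorer, longer reasoning, or other methods the essay does not specify.

```python
import random


def sample_answer(rng: random.Random, target: float) -> float:
    """Hypothetical noisy 'model': guesses a number near the true answer."""
    return target + rng.gauss(0.0, 10.0)


def score(answer: float, target: float) -> float:
    """Hypothetical verifier: higher is better (toy: it knows the target)."""
    return -abs(answer - target)


def best_of_n(n: int, target: float, seed: int = 0) -> float:
    """Spend n samples of 'test-time compute' and keep the best-scoring one."""
    if n < 1:
        raise ValueError("n must be at least 1")
    rng = random.Random(seed)
    candidates = [sample_answer(rng, target) for _ in range(n)]
    return max(candidates, key=lambda a: score(a, target))


if __name__ == "__main__":
    for n in (1, 4, 16, 64):
        answer = best_of_n(n, target=42.0)
        print(f"n={n:>2}  error={abs(answer - 42.0):.3f}")
```

Because every run uses the same seed, a larger `n` only adds candidates to the pool, so the reported error can never get worse as `n` grows. The model itself is unchanged; only the budget spent per question goes up.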

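It is easy to point out the holes in other belief systems and to criticize other doctrines without exposing your own position. So what do I believe? First, I believe the current generation of models will deliver very high returns on investment over time. As people learn how to work around the limitations and make use of existing APIs, we will see genuinely novel product experiences emerge and succeed. We will move past the skeuomorphic and incremental phase of AI products. We should not think of this as "artificial general intelligence" (AGI), because that definition is flawed in its framing, but as "minimum viable intelligence" that can be tailored to different products and use cases.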

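As for achieving artificial superintelligence (ASI), more structure is needed. Clearer definitions and distinctions would help us discuss more productively the trade-off between the economic value and the economic cost that each might bring. For example, AGI might deliver economic value to a subset of users (merely a local belief system), while ASI might exhibit unstoppable compounding effects and change the world, our belief systems, and our social structures. I do not believe that scaling transformers alone will get us to ASI; but alas, as some might say, that is just my atheist faith.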

Lost Faith

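The AI community cannot settle this holy war any time soon; there are no facts to bring to this fight of feelings. Instead, we should turn our attention to what it would mean for the AI community to question its faith in the scaling laws. A loss of faith could set off a chain reaction that reaches beyond large language models (LLMs) and affects every industry and market.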

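It must be said that in most areas of AI and machine learning we have not yet thoroughly explored the scaling laws; there are more miracles to come. If doubt does creep in, however, it will become harder for investors and builders to keep the same high confidence in the ultimate performance of "early in the curve" categories such as biotech and robotics. In other words, if we see large language models begin to slow and stray from the chosen path, the belief systems of many founders and investors in adjacent fields will collapse.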

Whether that is fair is another question.

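One view holds that "artificial general intelligence" naturally requires greater scale, so the "quality" of specialized models should show up at smaller scale, making them less likely to hit a bottleneck before they deliver real value. If a domain-specific model ingests only a fraction of the data, and therefore needs only a fraction of the compute to become viable, shouldn't it have plenty of room to improve? This makes intuitive sense, but we have found again and again that the key often lies elsewhere: including related or seemingly unrelated data frequently improves the performance of seemingly unrelated models. Including programming data, for example, appears to help improve broader reasoning ability.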

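In the long run, the debate over specialized models may be irrelevant. The ultimate goal of anyone building ASI (artificial superintelligence) is most likely a self-replicating, self-improving entity capable of limitless creativity in every domain. Holden Karnofsky, a former OpenAI board member and founder of Open Philanthropy, calls this creation "PASTA" (Process for Automating Scientific and Technological Advancement). Sam Altman's original plan for profitability appears to rest on a similar principle: "Build AGI, then ask it how to generate a return." This is eschatological AI, the final destiny.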

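The success of large AI labs such as OpenAI and Anthropic has fueled the capital markets' enthusiasm for backing "OpenAI for X" labs, whose long-term goal is to build "AGI" within their particular vertical or domain. Extrapolating from this breakdown by scale points to a paradigm shift away from OpenAI analogs and toward product-centric companies, a possibility I raised at Compound's 2023 annual meeting.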

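Unlike the eschatological model, these companies must demonstrate a sequence of progress. They will be companies built on scale-engineering problems rather than scientific organizations conducting applied research, and their ultimate goal is to build products.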

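In science, if you know what you are doing, you should not be doing it. In engineering, if you do not know what you are doing, you should not be doing it. - Richard Hamming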

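Believers are unlikely to lose their sacred faith any time soon. As noted earlier, as religions proliferate, they codify a script for living and worshipping, along with a set of heuristics. They build physical monuments and infrastructure that reinforce their power and wisdom and that show they "know what they are doing."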

In a recent interview, Sam Altman said this about AGI (emphasis ours):

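This is the first time I have felt that we really know what to do. There is still a huge amount of work between here and building an AGI. We know there are some known unknowns, but I think we basically know what to do, and it will take a while; it will be hard, but it is also tremendously exciting.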

Judgment

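In questioning the "Bitter Religion," scaling skeptics are reckoning with one of the most profound discussions of the past few years. Each of us has turned it over in some form. What happens if we invent God? How quickly will that God appear? What happens if AGI (artificial general intelligence) truly and irreversibly rises?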

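As with every unknown and complex topic, we quickly store our own particular reaction in our brains. Some despair at their impending irrelevance. Most expect a mix of destruction and prosperity. A final group expects pure abundance, with humans doing what we do best: continuing to look for problems to solve and solving the problems we create ourselves.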

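Anyone with a lot at stake wants to be able to predict what the world will look like for them if the scaling laws hold and AGI arrives within a few years. How will you serve this new god, and how will this new god serve you?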

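But what if the gospel of stagnation drives out the optimists? What if we begin to think that perhaps even God can decline? In an earlier piece, "Robotics FOMO, Scaling Laws, and Technology Forecasting," I wrote: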

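I sometimes wonder what happens if the scaling laws do not hold, and whether it would resemble the effect that revenue churn, slowing growth, and rising interest rates have had on many areas of technology. I also sometimes wonder what happens if the scaling laws hold completely, and whether it would resemble the commoditization curves of first movers and their value capture in many other fields.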

"The nice thing about capitalism is that, either way, we are going to spend a lot of money finding out."

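For founders and investors, the question becomes: what happens next? The candidates likely to become great product builders in each vertical are gradually becoming known. There will be more of them across industries, but this story has already begun to play out. Where will the new opportunities come from?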

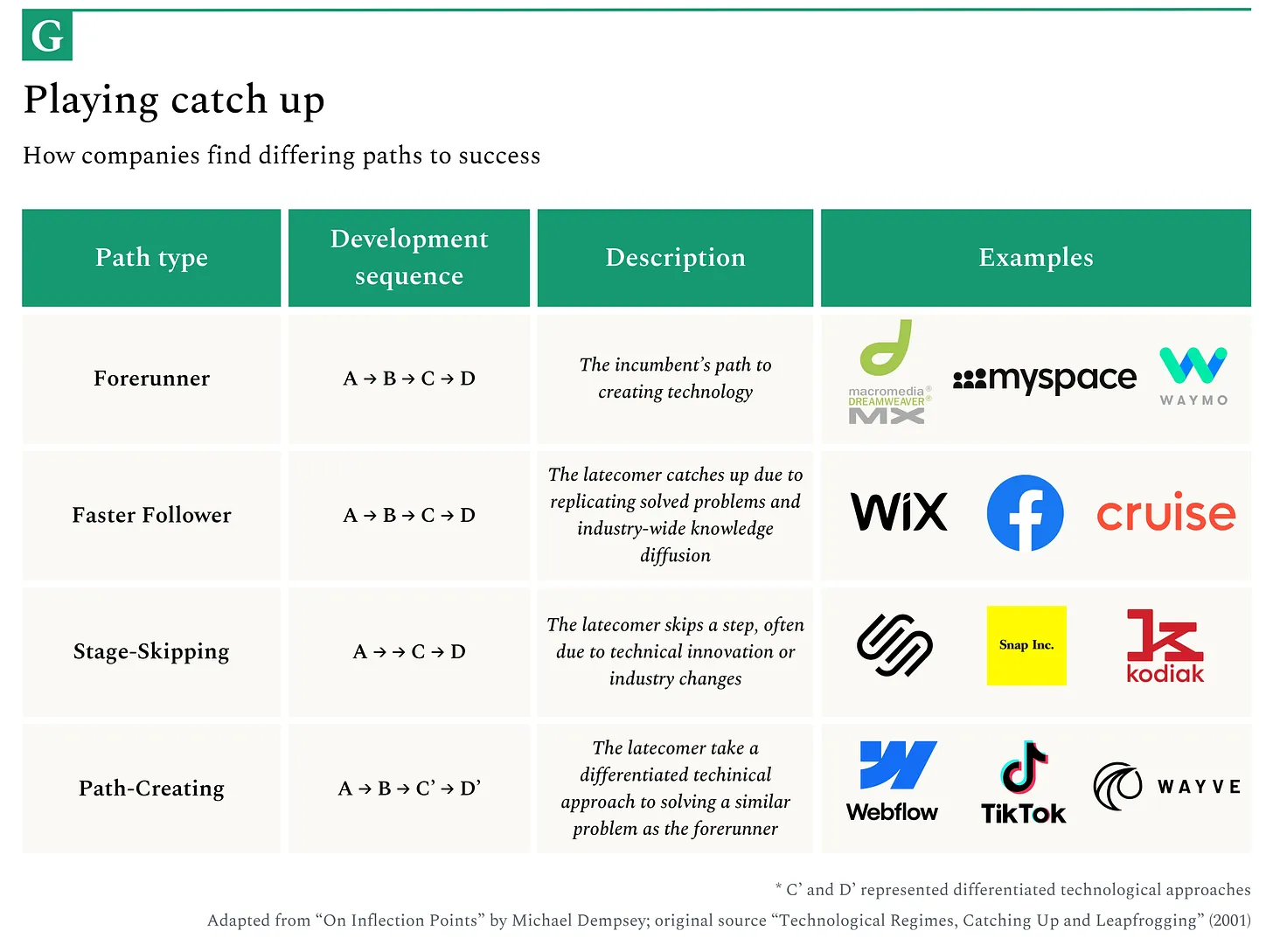

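If scaling stalls, I expect to see a wave of shutdowns and mergers. The companies that remain will shift their focus increasingly toward engineering, an evolution we should be able to anticipate by tracking the flow of talent. We have already seen signs that OpenAI is heading in this direction as it productizes itself more and more. This shift will open up room for the next generation of startups to overtake on the curve, leaning on novel applied research and science rather than engineering, and to surpass incumbents in the attempt to blaze new paths.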

Lessons from Religion

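My view of technology is that anything that looks obviously compounding usually does not stay that way for long. A related view that nearly everyone shares is that any business that looks obviously compounding ends up, strangely, developing at a far lower speed and scale than expected.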

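The early signs of religious schism tend to follow predictable patterns, and those patterns can serve as a framework for continuing to track the evolution of the "Bitter Religion."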

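It usually begins with the emergence of competing interpretations, whether for capitalist or ideological reasons. In early Christianity, differing views on the divinity of Christ and the nature of the Trinity led to schism and produced radically different readings of the Bible. Beyond the splits in AI we have already mentioned, other cracks are appearing. For example, we see a subset of AI researchers rejecting the core orthodoxy of the transformer and turning to other architectures, such as State Space Models, Mamba, RWKV, and Liquid Models. These are only soft signals for now, but they show the first shoots of heretical thinking and a willingness to rethink the field from first principles.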

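Over time, the impatient pronouncements of prophets also breed distrust. When a religious leader's prophecy fails to come true, or divine intervention does not arrive as promised, it plants the seeds of doubt.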

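The Millerite movement predicted that Christ would return in 1844, and when Jesus did not arrive on schedule, the movement fell apart. In the tech world, we usually bury failed prophecies quietly and let our prophets go on painting optimistic, long-horizon versions of the future even as the appointed deadlines are missed again and again (hi, Elon). Without continued improvement in raw model performance to back it up, however, faith in the scaling laws could face a similar collapse.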

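A corrupt, bloated, or unstable religion is vulnerable to apostates. The Protestant Reformation gained ground not only because of Luther's theology, but because it emerged during a period of decline and turmoil in the Catholic Church. When cracks appear in the dominant institution, long-standing "heretical" ideas suddenly find fertile soil.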

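In AI, we might watch smaller models or alternative approaches that achieve similar results with less compute or data, such as the work coming out of various Chinese corporate labs and open-source teams like Nous Research. Those who push past the limits of biological intelligence and overcome obstacles long considered insurmountable may also create a new narrative.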

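The most direct and timely way to see a shift begin is to track the movements of practitioners. Before any formal schism, religious scholars and clergy usually hold heretical views in private while appearing compliant in public. Today's equivalent may be AI researchers who outwardly follow the scaling laws but quietly pursue radically different approaches, waiting for the right moment to challenge the consensus or to leave their labs in search of theoretically broader horizons.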

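The tricky thing about religious and technological orthodoxy is that it is often partly right, just not as universally right as its most faithful believers think. Just as religions fold fundamental human truths into their metaphysical frameworks, the scaling laws clearly describe something real about how neural networks learn. The question is whether that reality is as complete and unchanging as the current fervor suggests, and whether these religious institutions (the AI labs) are flexible and strategic enough to bring the zealots along with them while also building the printing presses (chat interfaces and APIs) that let their knowledge spread.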

Endgame

"Religion is regarded by the common people as true, by the wise as false, and by the rulers as useful." - Lucius Annaeus Seneca

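One possibly outdated view of religious institutions is that once they reach a certain size, they become, like many human-run organizations, prone to giving in to the survival motive and trying simply to outlast the competition. In the process, they lose sight of the motives of truth and greatness (the two are not mutually exclusive).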

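I once wrote a piece about how capital markets become narrative-driven echo chambers, and how incentives tend to keep those narratives alive. The consensus around the scaling laws feels ominously similar: a deeply entrenched belief system that is mathematically elegant and extremely useful for coordinating the deployment of capital at scale. Like many religious frameworks, it may be more valuable as a coordination mechanism than as a fundamental truth.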
